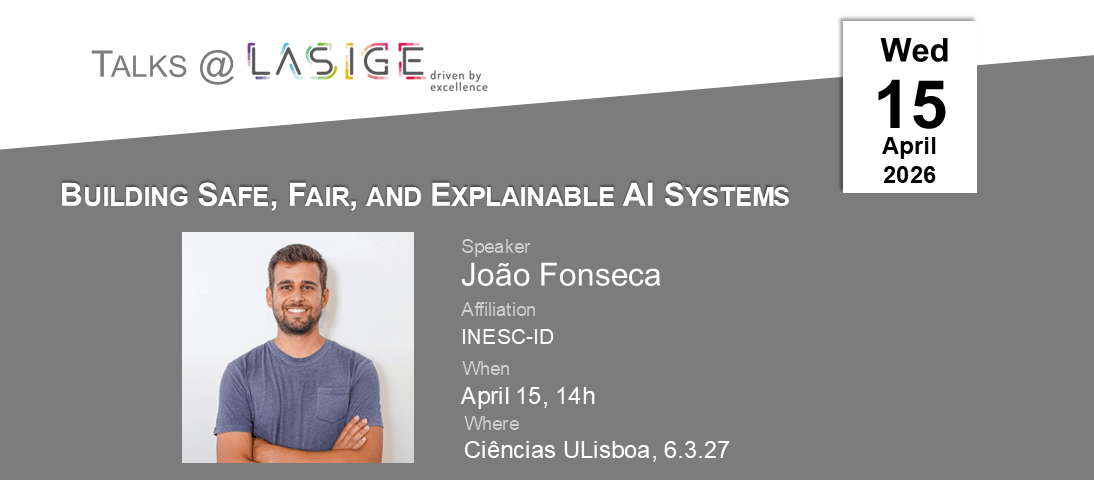

Title: Building Safe, Fair, and Explainable AI Systems

Speaker: João Fonseca (INESC-ID)

Date: April 15, 2026, 14h

Where: C6.3.27

Invited by: Cátia Pesquita

(coffee break included)

Abstract: As AI systems increasingly shape high-stakes decisions, my research develops principled methods to make them safer, fairer, and more interpretable. I focus on three themes: first, ensuring reliability in large language models through real-time safeguards, hallucination detection, and educational tools for cognitive guardrails; second, addressing fairness and temporal degradation in algorithmic recourse, with methods to quantify and correct inequities; and third, advancing interpretable machine learning via Shapley-based frameworks and tabular foundation models. If time allows, I will also discuss possible future research directions. Altogether, my work seeks to make AI systems more trustworthy for real-world decisions.

Bio: João Fonseca is a Postdoctoral Fellow at INESC-ID, working with Professors Paolo Romano and Rodrigo Rodrigues, and a visiting scholar at New York University, working with Professor Julia Stoyanovich at the Center for Responsible AI. His research focuses on fairness, safety, and explainability in machine learning and artificial intelligence. He holds a PhD in Information Management from NOVA Information Management School, funded by an MIT Portugal PhD grant; his doctoral work addressed synthetic data generation, imbalanced learning, and active learning for land use and land cover classification.